We Make AI Work

Vespa.ai is an AI-powered search platform for developing and operating large-scale applications that combine big data, vector search, machine-learned ranking, and real-time inference. With native tensor support for complex ranking and decisioning, Vespa enables real-time AI applications like RAG, recommendation, and intelligent search—at enterprise scale.

Search

Vespa is the world’s leading open text search engine and the world’s most capable vector database. In combination with Vespa’s integrated, distributed machine-learned model inference for relevance, this lets you build search applications of a quality you cannot achieve any other way.

Generative AI (RAG)

GenAI applications are only as good as the data we surface for them to work with; they need great search relevance. That takes much more than vector similarity: it takes hybrid search, relevance models, and multi-vector representations. Vespa is the only platform that lets you deploy such techniques with no limitations and at any scale.

Recommendation and personalization

Recommendation, personalization and ad targeting systems combine retrieval of eligible content with machine-learned model evaluation to select the best data items. Vespa lets you easily build applications that do this at any scale and complexity.

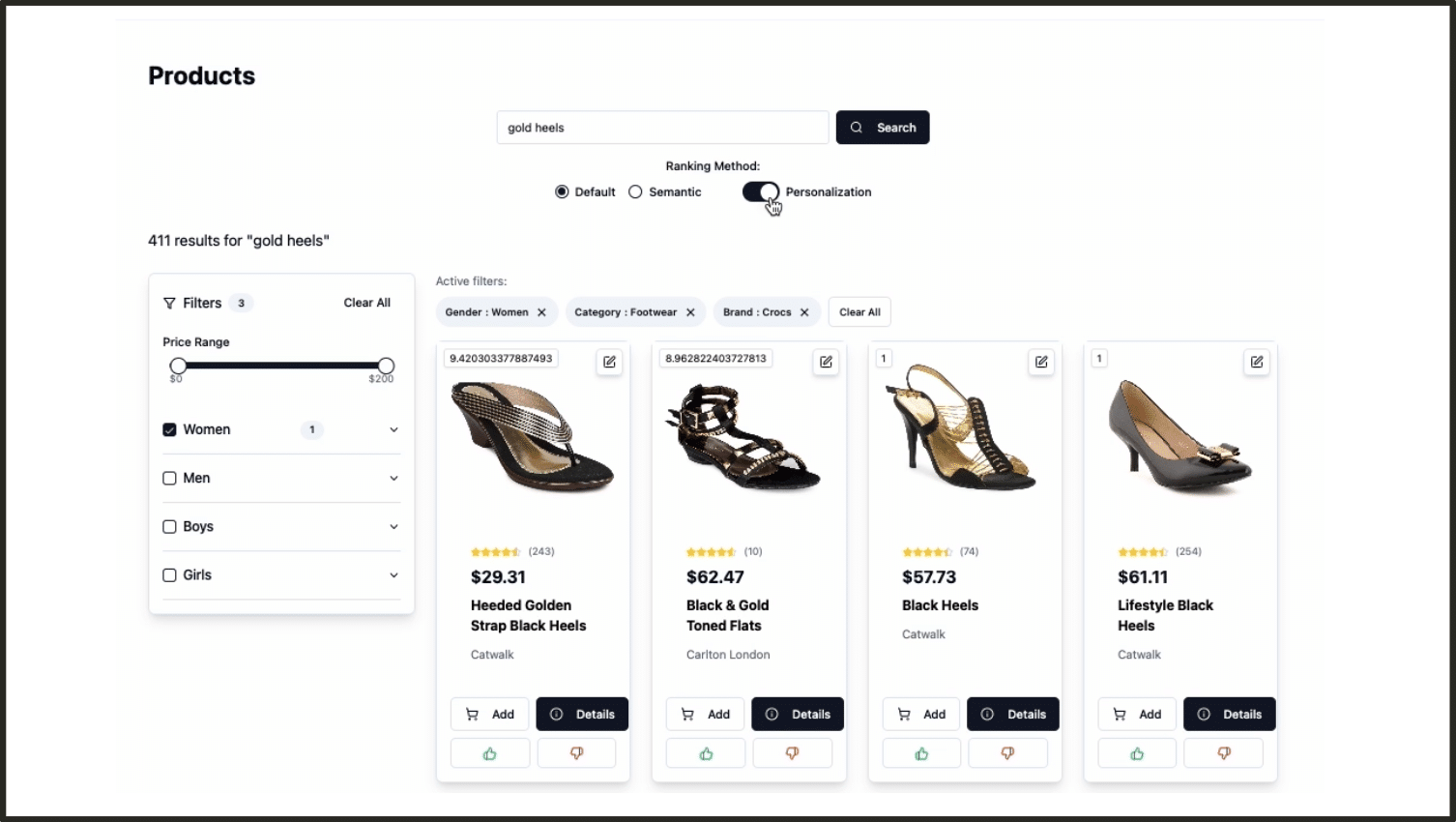

Semi-structured navigation

E-commerce and similar applications combine structured data with text and images, and need to blend search and recommendation seamlessly with structured navigation. Vespa provides all the features required to do this with great performance, at any scale.

Personal/private search

In applications working with personal data, any query will only access a small fraction of the total data, and building indexes would be wasteful – especially with vectors. Vespa provides a special mode – streaming search – which delivers all the industry-leading features of Vespa for personal/private search 20x cheaper than with indexing.

“As a reliable and scalable solution, Vespa has been instrumental in enabling Search at Spotify. We look forward to continuing our work with the Vespa team, and enabling innovation that will enhance the experience for Spotify listeners.”

Daniel Doro, Director of Engineering, Search

“Vespa is a battle-tested platform that allows us to integrate keyword and vector search seamlessly. It forms a key part of our AI research solution, guaranteeing both precision and rapidity in streamlining research processes. We highly recommend Vespa for its reliability and efficiency.”

Jungwon Byun, COO & Cofounder

“Vespa has been a critical component to Yahoo’s AI and machine learning capabilities across all of our properties for many years.”

Jim Lanzone, CEO

“Our team successfully implemented the entire recommendation process of one algorithm with Vespa, matching the latency requirements (provide recommendations under 100ms) and scalability needs.”

Ricardo Rossi Tegão, Machine Learning Engineer